Hi,

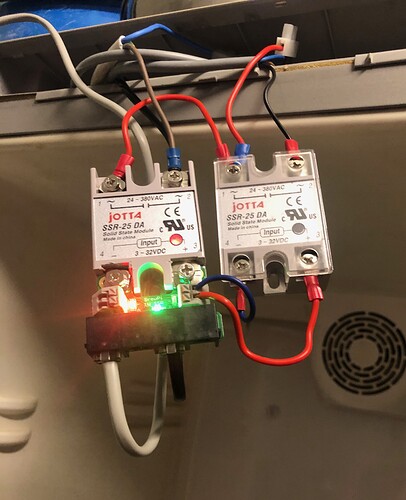

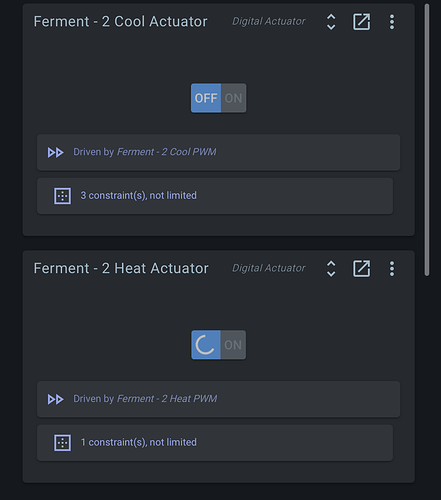

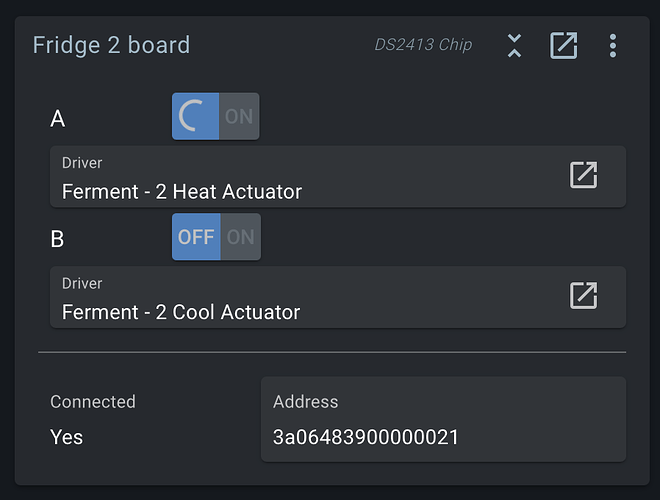

I have a problem with one of my fermenting fridges: channel A of the expansion board (used for the heater) appears to be locked on. I have swapped out the SSR, but there was no change. Even when cooling is required, channel A stays on. Defective board?

The block for the actuator shows a spinning icon on channel A.

Could you please run `brewblox-ctl log`? The most likely root cause is the cabling, in which case we’d expect multiple connected/disconnected events.

I’m indeed seeing constant disconnects / reconnects of 5 temp sensors and 2 DS2413 boards.

This can be caused by long cables lying next to each other, or by a single faulty cable or device causing issues for the OneWire bus.

For future reference, you can check the logs yourself with `docker-compose logs --follow spark-one`. The connect/disconnect messages are self-explanatory, and the UI blocks show the hardware addresses mentioned in the logs.
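As a rough aid for reading that output, here is a Python sketch that tallies connect/disconnect messages per hardware address, so a device that keeps dropping off the bus stands out. It rests on assumptions: it treats any line containing "disconnect" or "connect" as a connection event, and it assumes the address appears as 16 hex digits (the width of a 64-bit OneWire ROM address). The actual wording and address format of the Brewblox log messages may differ, so adjust `ADDRESS_RE` and the keywords to match what you see. `tally_connection_events` is a hypothetical helper, not part of Brewblox.

```python
import re
from collections import Counter

# Assumption: the device address appears in each message as 16 hex digits.
ADDRESS_RE = re.compile(r"\b[0-9A-Fa-f]{16}\b")


def tally_connection_events(lines):
    """Count connect/disconnect messages per hardware address.

    Returns a Counter keyed by (ADDRESS, event), where event is
    "connected" or "disconnected". Lines without a recognisable
    address or keyword are ignored.
    """
    counts = Counter()
    for line in lines:
        lowered = line.lower()
        # Check "disconnect" first, because it also contains "connect".
        if "disconnect" in lowered:
            event = "disconnected"
        elif "connect" in lowered:
            event = "connected"
        else:
            continue  # not a connection message
        match = ADDRESS_RE.search(line)
        if match:
            # Normalise case so the same device is counted once.
            counts[(match.group(0).upper(), event)] += 1
    return counts
```

For example, you could save this as a script that passes `sys.stdin` to `tally_connection_events()` and pipe `docker-compose logs spark-one` into it. Addresses with far more events than the rest are the first candidates to unplug.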

Thanks for the feedback, Bob. I’ll have a look. Just for info, though: the three fridges are “daisy chained” from the Spark, and fridge 2 is the last one. Everything had been working with no problems for a while. I have plugged fridge 2 into fridge 3’s place (which is functioning OK), and it continues to show the same problem. I’m not sure how it could be a wiring issue.

Just to rule out the wiring, I have tried a new patch lead directly from the Spark to the expansion board: same problem.

How much length in total do you have?

How is it arranged?

The board could be bad. If you take out this board, do the disconnects in the logs disappear?

Hi,

Three fridges:

Fridge 1 is connected to the Spark via its expansion board with approx. 4m of Cat5 cable (6 strands used).

Fridge 3 is connected to fridge 1 via the expansion boards with approx. 4m of telephone cable (4 strands).

Fridge 2 is connected to fridge 3 via the expansion boards (ditto).

I had another go at unplugging/replugging the wiring this evening, with no change. The affected board of fridge 2 is currently disconnected.

Immediately unplugging / replugging will not show much if the problem is that a single device is having trouble and causing knock-on effects on its neighbours. You’d want to plug in devices one by one over the course of a day, and see when the disconnect / reconnect messages start up again.
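The one-by-one procedure boils down to matching disconnect timestamps against the time each device was added. The Python sketch below assumes you note down when you plug in each device and collect the disconnect timestamps from the log yourself; `first_suspect` is a hypothetical helper, not part of Brewblox. It returns the first device whose window (from its plug-in time until the next device was added) contains a disconnect.

```python
from datetime import datetime
from typing import List, Optional, Tuple


def first_suspect(
    plug_in_times: List[Tuple[str, datetime]],
    disconnect_times: List[datetime],
) -> Optional[str]:
    """Return the device after whose plug-in the first disconnect appeared.

    plug_in_times: (device_name, time plugged in) for each device added.
    disconnect_times: timestamps of disconnect messages from the log.
    Returns None if no disconnect falls after the first device was added.
    """
    # Process the steps in the order the devices were actually plugged in.
    steps = sorted(plug_in_times, key=lambda step: step[1])
    for i, (device, added_at) in enumerate(steps):
        # This step's window ends when the next device was plugged in;
        # the last device's window is open-ended.
        next_added = steps[i + 1][1] if i + 1 < len(steps) else None
        for t in disconnect_times:
            if t >= added_at and (next_added is None or t < next_added):
                return device
    return None
```

Because a marginal device may take a while to cause trouble, the generous spacing between steps suggested above matters more than the exact bookkeeping.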

I do see two underpower warnings in your log. This may be the cause, or it may be nothing. How are you currently powering your Pi and your Spark? (USB or separate power feed for Spark, what kind of voltage / amperage on the Pi adapter?)

There are also some Docker network-related warnings. You may want to double-check the settings by running `brewblox-ctl enable-ipv6`.

Thanks again for the feedback,

The Pi is powered by a 2A USB adapter, and the Spark is powered via USB from the Pi.

Is there not an objective test that can be done to prove the expansion board?

After running `brewblox-ctl enable-ipv6`, I was locked out from connecting to the Spark.

The recommended power supply for a Pi 3 is 2.5A, so this may be the issue, especially when also powering Spark + peripherals.

If you are locked out like that, you’ll need to power-cycle the Pi to reconnect to it.

OK, I have now connected the original power supply to the Spark, so the Pi has its own. After I reconnected the problem board (which initially caused the Spark to blank the temp displays and stopped fridge 3 from heating), all seems OK. A bit odd, given that all three fridges were working previously.

Anything of interest here?

https://termbin.com/yk74

Been running for the last 24hrs.

Thanks again.

The reconnect messages disappeared entirely starting ~24h before the ctl log was made, so it appears the power supply was the problem.

Thanks, Bob, but why all of a sudden, when it has been OK from day one? And why only fridge 2, the last on the chain?

At a guess, the system was hovering just above a tipping point before, and some change pushed it over the edge, where the issue became noticeable.

This change really could have been anything: even something as simple as you adding a new graph (more history queries, more Pi power consumption).

Thanks, Bob. It’s all a black art to me: I can follow instructions and connect stuff, but the bit in between is for … the clever folk.

I can’t fault the feedback and help I have received over the years, thanks again.