Hi all,

I’m slowly easing myself into “brewing properly”. I’ve been at it for years with malt kits and a bucket but have decided I want to do this properly.

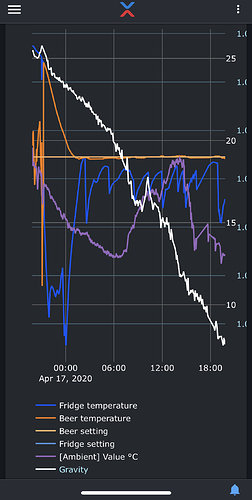

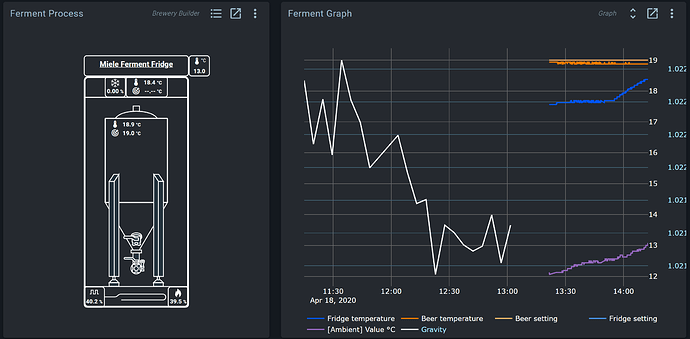

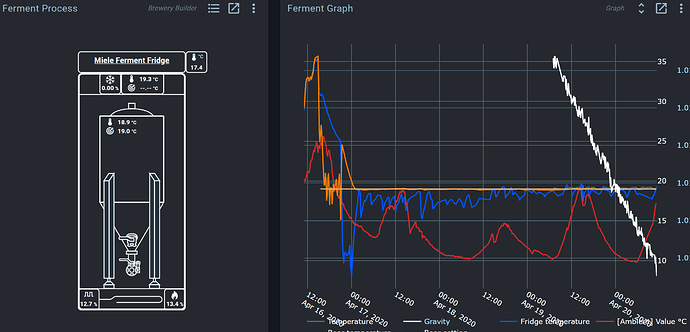

My lockdown project was getting a fermentation fridge set up. I’ve built myself one and have it working well. I have also put together an iSpindel, which is now calibrated and working.

Ok so my basic questions:

- I’m having some trouble getting the Spark and the iSpindel to work over WiFi simultaneously. I get one working and the other is temperamental. This is fixed in the short term by plugging in the USB, and I’m going to go through the instructions to tell the Pi exactly where the Spark is on the network. Before that, I would like to know whether it’s possible that they are interfering (both using :5080 or something).

- Is it possible to display data from the iSpindel on the Spark? I’d love to see gravity on the screen.

- I made my first really rookie mistake. I simply swapped the inputs to change the temp probe channel. Obviously this didn’t work, as it’s looking for the DS18B20 address (I ended up pitching at way too high a temp). I eventually swapped the channels in the Fridge dashboard by copying the sensor ID from one variable to another. Is there a simpler way I’ve missed?

- Next step is a kegerator before I build the brew day equipment. Is it possible to flash a Photon with the firmware and just connect a 1-Wire sensor? A full Spark for this seems overkill!

Thank you all in advance for putting up with my daft questions! Sorry about the long post. I have added some photos of my first build. I am very much enjoying myself.

The iSpindel and the Spark are separate devices with their own addresses, and thus shouldn’t suffer from port conflicts.

How are they temperamental?

@bdelbosc built a plugin for the iSpindel. https://github.com/bdelbosc/brewblox-ispindel/blob/develop/README.md You can use the Metrics and Graph widgets to then show the collected data on a dashboard.

If you already set up the iSpindel service, and have trouble getting that to connect, feel free to run brewblox-ctl log, and post the result url.

You can always reconfigure blocks and their links. Swapping addresses will work, but you can also edit the setpoint block to use the other sensor. Swap the sensor block names to preserve graph config, and you’re good to go.

To see which blocks are using a sensor, go to the Spark service page, and toggle the relations view.

Depending on your setup, you can control/monitor your kegerator with the same Spark. I believe you need to place the Photon on a Spark v2 board for it to function, but I don’t know whether any old v2 boards are still around.

Don’t worry about asking questions! We added a lot of improvements based on people asking questions. I think a sensor swap functionality is somewhere on the backlog as well, but I’d have to check.

I really do like how you handled the cabling in the fridge door. Is that a hose at the bottom, or how does that work with closing the door?

Thanks for the reply.

I have the iSpindel integrated and the service running. It’s currently logging fine with the Spark plugged in. Before I had the iSpindel, the Spark was rock solid over WiFi. When I tried to use both, the iSpindel dropped out for hours. I ran it over serial, and it’s connecting to the WiFi fine with good signal and uploading to the correct address. After a couple of reboots and some faff (increased the report interval to 5 mins from 30), the iSpindel runs well over WiFi, but the Spark drops in and out.

I’ve made a few attempts at this with similar results, so I will attach a log. Both have static IPs. Log here:

https://termbin.com/x4ku

Works fine with the USB, I’m going to try assigning the spark ID to the controller using add-spark with the dedicated IP.

And thank you =-) I am quite pleased with the fridge, used corrugated electrical conduit for the door close mechanism. Spent some time trying to integrate drag chain but as the door opens past the 180 degree mark I couldn’t get it to work. I still need to do a little bit of cable management on the front. Straight power connectors are on order!

Your log shows that the Spark suddenly can’t be discovered anymore using mDNS.

It’s possible that the iSpindel somehow interacts with mDNS, but it’s very hard to say. mDNS as a protocol can be fickle. My suggestion would indeed be to assign a static DHCP lease to the spark, and then run

brewblox-ctl add-spark --name spark-one --force --device-host 192.168.0.15 --device-id 4E0038000851353532343835

Hi Bob,

All devices are already static, I will run the add-spark this evening and see if it helps. The sudden bit is probably the point where the usb is disconnected to make the log.

I’ll let you know if it works

Cheers.

So far so good, thank you. I’ve had both connected for the last hour. So I know for the future: does --force make the Spark connect over WiFi only, or will it still accept USB?

Many thanks! It’s happily fermenting away and I’m pleased as punch (well pleased as IPA)

The --force argument allows you to override an existing service in the compose.yml.

Wifi/USB is set by --device-host or --discovery flags. See https://brewblox.netlify.app/user/connect_settings.html#device-host for more info.

Enjoy your IPA =)

Ah the woes of intermittent faults!

Afraid it’s back and dropping out again. As it’s so intermittent, I’m not convinced it has anything to do with the iSpindel. It comes back every half an hour or so and it’s back-filling data well, so it’s working fine.

I had a look through the logs but don’t really know what I’m looking for. Any advice would be appreciated.

https://termbin.com/mfwa

I’m seeing quite a lot of network interrupts, also in other services.

Docker seems to have issues with ipv6, so it may be helpful to disable that on your Pi.

brewblox-ctl down

echo "net.ipv6.conf.all.disable_ipv6 = 1" | sudo tee -a /etc/sysctl.conf

echo "net.ipv6.conf.default.disable_ipv6 = 1" | sudo tee -a /etc/sysctl.conf

echo "net.ipv6.conf.lo.disable_ipv6 = 1" | sudo tee -a /etc/sysctl.conf

sudo sysctl -p

# Check results - this command should print "1"

cat /proc/sys/net/ipv6/conf/all/disable_ipv6

brewblox-ctl up

Edit: added down/up commands

Thank you. I have disabled IPv6 but still have some connectivity issues (it’s been up 30 or so mins now and is mostly disconnected).

New log: https://termbin.com/nn3x

Your history service has stopped constantly reconnecting, so it seems to have helped somewhat.

Your service initially connects to the Spark, and then loses connection. Further connection attempts fail.

This may be network-related, or an issue on the Spark.

If you go to 192.168.0.15 in your browser, does the Spark respond? It should show a short message that includes its device ID.
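If a browser isn’t handy, the same check can be sketched from the Pi’s shell. This is only a rough sketch: it assumes curl is installed, the function names are made up for illustration, and the exact wording of the Spark’s response isn’t known here, so it simply does a case-insensitive search for the device ID from the add-spark command above.

```shell
#!/usr/bin/env bash
# Values taken from this thread; adjust for your own setup.
SPARK_HOST="192.168.0.15"
DEVICE_ID="4E0038000851353532343835"

# Hypothetical helper: succeeds if the text in $1 mentions
# the device ID in $2 (case-insensitive, literal match).
mentions_device_id() {
  printf '%s' "$1" | grep -qiF -- "$2"
}

# Hypothetical helper: fetches the Spark's page and reports what came back.
check_spark() {
  local body
  # --max-time keeps the check from hanging when the Spark is unreachable
  if ! body="$(curl --silent --max-time 5 "http://$1/")"; then
    echo "no HTTP response from $1"
    return 1
  fi
  if mentions_device_id "$body" "$2"; then
    echo "Spark answered and reported the expected device ID"
  else
    echo "something answered on $1, but it did not mention $2"
    return 2
  fi
}

# Usage on the Pi:
#   check_spark "$SPARK_HOST" "$DEVICE_ID"
```

Running it a few times while the service shows as disconnected should help tell apart "the Spark is off the network" from "the Spark is reachable, but the service can’t connect".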

Beyond that, you can try connecting the Spark over USB to do some troubleshooting. You’ll have to run the add-spark command again, without the --device-host 192.168.0.15 flag.

After connecting, could you please export blocks from the spark service page (action menu, top right)? It may be a memory issue. We’re in the process of finishing up a release that includes fixes for that.

Hi, so I’ve let it run for a few hours and it’s undergone a swap. The Spark is now solidly connected over WiFi after the iSpindel dropped out. I attached the exported blocks.

brewblox-blocks-spark-one.json (6.6 KB)

Blocks seem completely fine. The conflict between Spark and iSpindel is rather weird: I’m not aware of them sharing any resources or addresses.

How are you powering your Pi? It’s a long shot, but connectors with too little amperage have been known to cause issues.
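One way to rule this out without a monitor attached, assuming a Raspberry Pi with the stock vcgencmd tool: `vcgencmd get_throttled` prints a hex value in which bit 0 means under-voltage is being detected right now, and bit 16 means it has occurred since boot. The helper name below is made up for illustration; it only decodes those two bits.

```shell
#!/usr/bin/env bash
# Hypothetical helper: decode the value printed by `vcgencmd get_throttled`,
# e.g. "throttled=0x50005". Only the two under-voltage bits are checked.
undervoltage_status() {
  local raw="${1#throttled=}"   # strip the "throttled=" prefix if present
  local value=$(( $raw ))       # shell arithmetic understands 0x... hex
  if [ $(( value & 0x1 )) -ne 0 ]; then
    echo "under-voltage now"
  elif [ $(( value & 0x10000 )) -ne 0 ]; then
    echo "under-voltage since boot"
  else
    echo "no under-voltage"
  fi
}

# Usage on the Pi:
#   undervoltage_status "$(vcgencmd get_throttled)"
```

A result of "no under-voltage" after the Spark has dropped out a few times would make the power supply an unlikely culprit.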

The Pi is powered by a 3.0A USB supply with a 0.5m, fairly high quality micro USB cable. It hasn’t given any under-voltage warnings yet. I had it plugged into an HDMI display for a bit, so I would have seen the warning.

The spark has a 12V 1A supply built into the fridge via the barrel connector.

I’m going to have a dig around and see if I can find an old router, and run it on a different network to compare. Can’t give it a go until I have the hydrometer out of the beer tho.

Thanks for your help!

To add to my list of questions: I seem to have lost a load of data from the iSpindel. Not sure what happened?

https://termbin.com/jv4k

If you use the graph settings to select the period until 19/04/2020 00:00, do you get iSpindel data?

We automatically select the most suitable downsampled dataset (based on # of points) from influx.

It has happened before that for very patchy datasets, it decided that realtime data (only kept for the last 24h) was more appropriate than downsampled data.

We added some safeguards then to discourage that, but may have to add some hard limit where it will never choose realtime data if start < now - 24h.
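As a rough sketch of what such a hard limit could look like (this is not the actual history service code; the function names and the "realtime"/"downsampled" labels are made up for illustration, and timestamps are assumed to be epoch seconds):

```shell
#!/usr/bin/env bash
# Realtime data is only kept for the last 24h.
REALTIME_RETENTION_S=$((24 * 60 * 60))

# Hypothetical guard: succeeds only if the query start ($1) is
# within the retention window, measured back from now ($2).
realtime_allowed() {
  [ "$1" -ge $(( $2 - REALTIME_RETENTION_S )) ]
}

# Hypothetical override: $3 is whatever dataset the existing
# point-count heuristic preferred. Realtime is vetoed when the
# period starts before the retention window.
choose_dataset() {
  if [ "$3" = "realtime" ] && ! realtime_allowed "$1" "$2"; then
    echo "downsampled"
  else
    echo "$3"
  fi
}

# Usage:
#   now="$(date +%s)"
#   choose_dataset "$(( now - 3 * 24 * 60 * 60 ))" "$now" realtime
```

The point-count heuristic stays in charge for recent periods; the guard only kicks in when it would pick a dataset that can’t cover the requested start.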

Yes, when the end date is set to before the point where the data goes missing, it displays the old data.

Ok. I’ll add an issue to set an override in the selection algorithm to prevent that.
